Are IT Support’s Customer Satisfaction Surveys Their Own Worst Enemy?

OK, it’s a bit of a clickbait-y title – but we need to question whether the current “handling” of IT support customer satisfaction (CSAT) surveys seriously affects their uptake and value, and not in a good way. After all, it’s common for IT service desks to receive a very low level of survey feedback (a response rate of less than 10%).

So, is it time to take a step back from the customer satisfaction survey status quo to understand what’s needed to make customer satisfaction surveys more valuable (and, in some situations, just valuable)?

Death by Customer Satisfaction (CSAT) Surveys

Let’s start with you: How often do you fill in CSAT surveys?

Or, if it’s easier to answer, what proportion of the surveys you get emailed do you fill in? I bet you delete more than you fill out. And, if you’re anything like me, when the email boldly states “This survey will only take you 10 minutes,” you’re probably going to click the delete-email button within seconds. Especially when everyone and their dog wants to get your feedback these days.

Thus, you can probably understand why people are so hesitant to complete CSAT surveys, especially when a survey takes longer to complete than the original transaction it refers to.

Then there are the scenarios where you experience “end-of-the-spectrum customer service” – i.e. extremely good or extremely poor customer service – and you feel compelled to provide feedback.

This is ultimately just about understanding good old-fashioned human behavior. So why do we make it so hard for customers (of our IT services and support) to provide their feedback, or so easy for them not to?

The Common Barriers to CSAT Feedback

You could probably quickly name a few – maybe some that can be found in your organization. And sadly, they’re still there despite IT service desk CSAT surveys getting a response rate of less than 10%.

It’s almost as though people aren’t really bothered about the feedback itself. Well, not when the feedback scores are high enough to hit the required service level agreement (SLA) target.

Putting this misfocused mindset to one side, it’s not difficult to deal with many of the common barriers to eliciting survey feedback. For instance:

- The timeliness of the feedback request – because people are more likely to respond when they’ve just been helped (whether in a good or a bad way).

- The ease of survey completion – there’s some good advice from Aale Roos available here.

- Recognizing that people are busy – as your survey will be competing with a long to-do list, make it worth their while by using incentives (in fact, it’s one of Aale’s tips).

- People assuming that nothing will be done with their feedback – i.e. that completing the survey would be a waste of their time.

None of these are insurmountable barriers. In this blog, I want to look at the last one in more detail.

A Personal-Life Example

I use a certain online retailer a lot – it’s the convenience more than anything, from searching through to paying. I also moan a lot (on social media) about the quality of their delivery drivers, because the drivers seem to see me as a front door to throw the package through rather than as a customer of their employer.

However, I’m stuck. As I have said to the retailer’s customer service staff, if I could find a similar company with a better delivery service, I would jump ship in a heartbeat. My moaning on social media and emails to the retailer’s customer service team seem to achieve very little though, other than allowing me to “vent my spleen.”

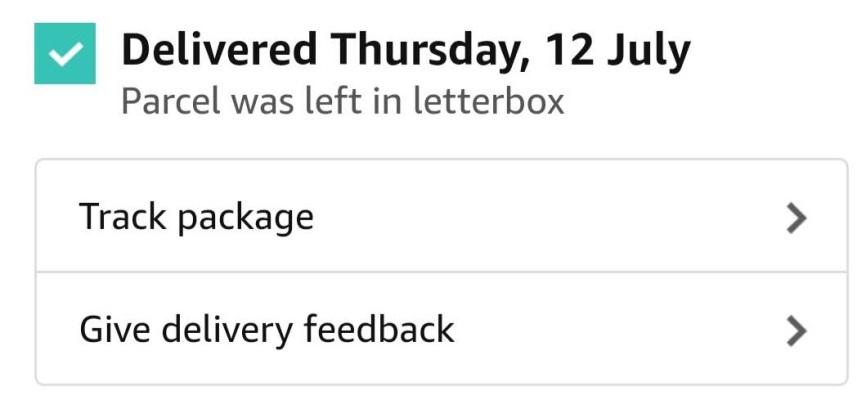

So, imagine my joy when I found a delivery driver feedback mechanism “hidden” within the retailer’s mobile app.

Finally, a way to collectively educate the retailer on the quality of its “own brand” delivery service.

Well, not exactly. Or at least that’s what it seems.

One of my first pieces of feedback, via this route, was that a delivery driver had left two packages outside my front door ON THE STREET. Yes, on the pavement and on a main road! They also had the audacity to state that they had left them in my nominated “safe place.”

To the Batmobile – sorry, retailer’s mobile app – I thought. And I duly left negative feedback. Then I waited. And waited. No response, just the usual “We think you’d like a new tablet” alerts on my phone.

After a few days, I checked with the retailer about the response I expected to my extremely negative feedback. It seems that if I wanted a response, I also needed to contact customer service!

Do I feel it was worth my while providing the feedback? No. Am I likely to provide negative feedback again via this official route? No – I’ll just contact customer service.

So, what happens with CSAT feedback on your IT service desk? More importantly, do customers know what the service desk is doing with their feedback (such that they are likely to provide feedback again)?

Feedback Handling in the IT Support Industry

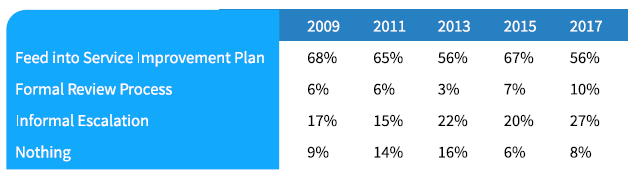

The Service Desk Institute (SDI) Service Desk Benchmarking Report 2017 offers up some interesting insights into what happens with CSAT feedback, as shown below:

And this is based on the 97% of IT service desks that collect customer feedback (this was another survey question).

While it’s good to see half of IT service desks using the customer feedback for improvement activity, albeit with this being the lowest level in a decade, it’s worrying that half don’t use the aggregated information for improvement purposes. After all, in the wise words of IT service management (ITSM) legend Ivor Macfarlane: “Customer feedback is free consultancy, so why wouldn’t you use it?”

It’s also worrying that one in twelve organizations do nothing with the feedback data they collect. I guess they are just happy that SLA targets are being met.

Closing the Customer Feedback Loop

Sadly though (and perhaps an opportunity for the next SDI Benchmarking report), the statistics don’t show whether the person giving the feedback receives a response based on a consistently applied policy.

Such a response could be an apology, the provision of additional help, or a thank you, with the customer informed of any action that will be taken – from the redesign of operational policies and practices to the individual recognition of those faring well in the received feedback.
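To make the idea of a consistently applied response policy a little more concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration only: the 1-5 score scale, the thresholds, the `SurveyResponse` fields, and the wording of each action are hypothetical and not taken from the SDI report or any particular ITSM tool.

```python
from dataclasses import dataclass


@dataclass
class SurveyResponse:
    ticket_id: str
    score: int             # hypothetical 1-5 CSAT scale (assumption)
    ticket_resolved: bool  # did the customer say the issue was solved?


def follow_up_action(response: SurveyResponse) -> str:
    """Return the follow-up owed to the customer under this illustrative policy."""
    if not 1 <= response.score <= 5:
        raise ValueError(f"score must be 1-5, got {response.score}")
    # An unresolved issue takes priority over the score itself.
    if not response.ticket_resolved:
        return "reopen ticket and offer additional help"
    # Illustrative thresholds: 1-2 is poor, 4-5 is good, 3 is neutral.
    if response.score <= 2:
        return "apologize and explain what will change"
    if response.score >= 4:
        return "thank customer and pass praise to the agent"
    return "thank customer and log feedback for review"


print(follow_up_action(SurveyResponse("T-001", 1, True)))
```

The point of the sketch is simply that every piece of feedback maps to some visible response, so no customer is left waiting and wondering whether anyone read it.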

As an aside, and in the context of the above example, I was recently told by a delivery driver from another delivery firm that they receive a nice “bonus” (on a rewards card) when good feedback is received about the service they provide (from customers using various channels including Twitter).

Knowing that something tangible is, rather than isn’t, happening with the feedback you provide surely motivates you to provide it. So why should it be any different with IT service desk customers?

And, in addition to increasing the level (and accuracy) of customer feedback, there’s most likely a need to resolve the customer issues highlighted in the feedback. For example, Happy Signals’ employee-experience happiness data shows that “My ticket was not solved” is the second-highest driver of employee unhappiness (with IT support), cited by 33% of unhappy employees.

If you stop to think about it, your IT service desk’s CSAT survey should be the means of improving your service (and the employee experience), and something that encourages people to provide even more feedback, not just the means for understanding how well the service desk is doing.

So, what about your IT service desk’s CSAT feedback mechanism and outcomes? Have you closed the loop (and addressed the other opportunities to encourage more customer feedback)? What advice would you share with others?